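Problem: Our product uses long-lived TCP connections with a custom PDU to speed up business processing. Load balancing is handled by NetScaler with a least-connections policy, so every new connection is placed where it evens out the load. A hardware load balancer, however, lacks flexibility and freedom in this scenario. Its main drawbacks are: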

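(1) During normal operation, when a service instance goes down or is redeployed, the long-lived connections it held are "driven" to the other service machines. But once that machine has restarted, who will use it? The existing connections stay where they are, so the restarted machine receives almost no traffic.

(2) Scaling out requires changing the NetScaler configuration: one change for every machine added.

(3) Single-point bottleneck: all requests pass through the load balancer, so as the request volume keeps growing it inevitably becomes a bottleneck.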
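Drawback (1) can be made concrete with a small simulation. The sketch below is not NetScaler's algorithm, only a simplified least-connections model under stated assumptions: three hypothetical servers, nine long-lived connections, and clients that reconnect immediately when their server goes down. The class and method names are invented for illustration.

```java
import java.util.Arrays;

/** Toy model of least-connections balancing with long-lived connections. */
final class LeastConnectionsDemo {

    /** Index of the live server with the fewest connections (ties: lowest index). */
    static int pick(int[] connections, boolean[] up) {
        int best = -1;
        for (int i = 0; i < connections.length; i++) {
            if (up[i] && (best == -1 || connections[i] < connections[best])) {
                best = i;
            }
        }
        return best;
    }

    /** Opens 9 connections, restarts server 0, and returns the final per-server counts. */
    static int[] simulate() {
        int[] connections = new int[3];
        boolean[] up = {true, true, true};

        // Nine long-lived connections are spread evenly: [3, 3, 3].
        for (int i = 0; i < 9; i++) {
            connections[pick(connections, up)]++;
        }

        // Server 0 goes down; its clients reconnect and land on servers 1 and 2.
        int displaced = connections[0];
        connections[0] = 0;
        up[0] = false;
        for (int i = 0; i < displaced; i++) {
            connections[pick(connections, up)]++;
        }

        // Server 0 comes back, but no existing connection moves to it.
        up[0] = true;
        return connections;
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(simulate())); // prints [0, 5, 4]
    }
}
```

Only connections opened after the restart would reach server 0, which is why the restarted machine stays idle while the others carry the displaced load.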

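To address these problems, the current workaround is:

(1) Every time a connection is established, record it in a database.

(2) The service discovery side periodically polls the state of all connections and, when it finds an imbalance, notifies the consumers to rebalance.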

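Analysis:

Taken together, these are really service discovery and registration problems, and the current workaround has the following issues:

(1) Anything based on polling leaves room for optimization. With a long polling interval the reaction is slow. With a short interval system resources are wasted, because such changes actually happen only a handful of times a year.

(2) The coupling between consumers and providers increases, because the provider side directly notifies consumers to rebuild connections in order to rebalance.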

Solution:

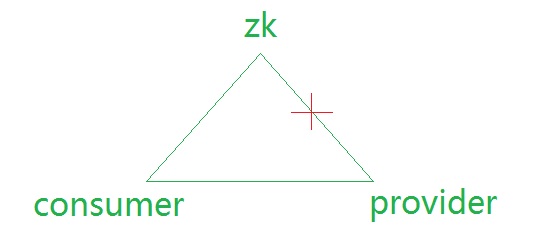

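This is in fact a textbook case of "service discovery and registration", which can be solved with ZooKeeper, Spring Cloud's Eureka, and similar tools. Here is how to solve it with ZooKeeper:

(1) On startup, the provider creates a service "root node" (if it does not already exist) and then registers a child node under that root node.

(2) The consumer watches for changes to the children of the root node.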
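Once the consumer knows the child list, it does the routing itself. The following is a minimal sketch of one possible consumer-side selector, assuming the child node names are provider addresses and that the consumer tracks its own open connections per provider. `ProviderSelector`, `onChildrenChanged`, and `acquire` are hypothetical names, and the ZooKeeper wiring is left out.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Consumer-side least-connections selection over the currently registered providers. */
final class ProviderSelector {
    // provider address -> number of connections this consumer holds to it
    private final Map<String, Integer> connections = new TreeMap<>();

    /** Called with the full child list whenever the root node's children change. */
    synchronized void onChildrenChanged(List<String> children) {
        connections.keySet().retainAll(children);   // drop providers that disappeared
        for (String child : children) {
            connections.putIfAbsent(child, 0);      // new providers start at zero
        }
    }

    /** Picks the provider with the fewest connections and counts the new connection. */
    synchronized String acquire() {
        String best = null;
        for (Map.Entry<String, Integer> e : connections.entrySet()) {
            if (best == null || e.getValue() < connections.get(best)) {
                best = e.getKey();
            }
        }
        if (best == null) {
            throw new IllegalStateException("no provider registered");
        }
        connections.merge(best, 1, Integer::sum);
        return best;
    }
}
```

In this sketch a newly registered provider starts at zero connections, so it attracts the next connections without any configuration change on the consumer.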

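A few key points:

(1) When creating the root node, check whether it already exists. You can implement this yourself (create it directly and ignore the NodeExistsException, or check for existence first and then create it), or simply use the following method: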

    ZKPaths.mkdirs(zooKeeper, "/Service");

(2) Create the child node as an ephemeral node, CreateMode.EPHEMERAL, so that the lifetime of the registered information is bound to the lifetime of the service's session.

    String nodePath = ZKPaths.makePath("/Service", localIp);
    zooKeeper.create(nodePath, nodeInfo, ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.EPHEMERAL);

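(3) Set the session timeout

Ephemeral nodes are closely tied to the session timeout. Suppose the provider machine suddenly loses power: the session timeout determines how long ZooKeeper waits before it deletes the ephemeral node and notifies consumers that the provider is unavailable. The shorter the timeout, the sooner an unexpected failure is detected. If it is too short, though, ordinary network jitter can make ZooKeeper wrongly treat the session as expired. Neither too large nor too small works well. By default the server accepts values from 2*tickTime to 20*tickTime (4 s to 40 s with a tickTime of 2 s). A client value inside this range takes effect as is; a smaller value is raised to the minimum, 2*tickTime, and a larger value is lowered to the maximum, 20*tickTime.

Client-side setting: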

    CuratorFramework curatorFramework = CuratorFrameworkFactory.builder()
            .connectString("10.224.2.116:2181")
            .retryPolicy(retryPolicy)
            .connectionTimeoutMs(3000)
            // 5 s: tolerates up to 5 s of network instability, but after an unexpected
            // crash it also takes up to 5 s before the node is removed.
            .sessionTimeoutMs(5000)
            .build();

Defaults used by the server:

    public void setMinSessionTimeout(int min) {
        this.minSessionTimeout = min == -1 ? tickTime * 2 : min;
        LOG.info("minSessionTimeout set to {}", this.minSessionTimeout);
    }

    public void setMaxSessionTimeout(int max) {
        this.maxSessionTimeout = max == -1 ? tickTime * 20 : max;
        LOG.info("maxSessionTimeout set to {}", this.maxSessionTimeout);
    }

Server-side handling:

    int minSessionTimeout = getMinSessionTimeout();
    if (sessionTimeout < minSessionTimeout) {
        sessionTimeout = minSessionTimeout;
    }
    int maxSessionTimeout = getMaxSessionTimeout();
    if (sessionTimeout > maxSessionTimeout) {
        sessionTimeout = maxSessionTimeout;
    }
    cnxn.setSessionTimeout(sessionTimeout);
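As a worked example of this clamping, the sketch below reproduces the same arithmetic in a standalone method, assuming tickTime = 2000 ms and no explicit minSessionTimeout or maxSessionTimeout in the server configuration. `negotiate` is a hypothetical name, not a ZooKeeper API.

```java
/** Standalone re-implementation of the server-side session timeout clamping. */
final class SessionTimeoutExample {

    /** Returns the timeout the server would grant, using the 2x / 20x tickTime defaults. */
    static int negotiate(int requestedMs, int tickTimeMs) {
        int min = tickTimeMs * 2;
        int max = tickTimeMs * 20;
        return Math.max(min, Math.min(max, requestedMs));
    }

    public static void main(String[] args) {
        int tickTime = 2000; // assumed server tickTime in milliseconds
        System.out.println(negotiate(1000, tickTime));  // below the minimum, raised to 4000
        System.out.println(negotiate(5000, tickTime));  // within range, kept at 5000
        System.out.println(negotiate(60000, tickTime)); // above the maximum, lowered to 40000
    }
}
```

On a real connection, the client can check the value it was actually granted with `ZooKeeper.getSessionTimeout()`.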

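(4) Reconnection

Suppose a network interruption occurs but lasts less than the session timeout. After reconnecting, the session is still valid and is the same session, so the node is not removed and consumers are not told that the node is unavailable, which is the expected behavior. If the network stays unreachable for longer than the session timeout, however, the server removes the nodes bound to the session. When the network then recovers and the connection is re-established (the ZooKeeper client keeps retrying a dropped connection in the background), nobody re-registers the service node, so re-registration logic is needed.

This uses functionality wrapped by curatorFramework (the layering is zkclient -> curatorClient -> curatorFramework):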

    curatorFramework.getConnectionStateListenable().addListener(new ConnectionStateListener() {
        @Override
        public void stateChanged(CuratorFramework client, ConnectionState connectionState) {
            // RECONNECTED: the connection was lost and has been successfully re-established
            if (connectionState == ConnectionState.RECONNECTED) {
                // re-register the service node here
            }
        }
    });
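One detail worth handling: Curator also reports RECONNECTED after a short disconnect in which the session survived, and in that case the ephemeral node still exists. Re-registration should therefore be idempotent. The sketch below shows that logic against a hypothetical `NodeStore` interface that stands in for the two ZooKeeper operations; with Curator they would roughly correspond to `checkExists().forPath(path)` and `create().withMode(CreateMode.EPHEMERAL).forPath(path, data)`. The in-memory store exists only to make the example runnable.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;

/** Idempotent re-registration: create the ephemeral node only if it is missing. */
final class ReRegistration {

    /** Hypothetical stand-in for the two ZooKeeper operations needed here. */
    interface NodeStore {
        boolean exists(String path);
        void createEphemeral(String path, byte[] data);
    }

    /** In-memory store, for demonstration only. */
    static final class InMemoryStore implements NodeStore {
        final Set<String> paths = ConcurrentHashMap.newKeySet();
        int creates = 0;

        @Override
        public boolean exists(String path) {
            return paths.contains(path);
        }

        @Override
        public void createEphemeral(String path, byte[] data) {
            paths.add(path);
            creates++;
        }
    }

    /** Call from the RECONNECTED branch; returns true if the node had to be re-created. */
    static boolean ensureRegistered(NodeStore store, String path, byte[] data) {
        if (store.exists(path)) {
            return false; // session survived the disconnect, node is still there
        }
        store.createEphemeral(path, data); // session expired, node was removed by the server
        return true;
    }
}
```

The check and the create are not atomic, so against a real ZooKeeper the create call should still tolerate a NodeExistsException.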

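(5) Provide more information

In most cases the IP address alone is enough, but for extensibility the child node's data can be designed as JSON (or a similar format) that carries more information, such as payload and health check information. Consider this case (see the figure below): the network between ZooKeeper and the providers is down, while the networks between ZooKeeper and the consumers, and between the consumers and the providers, are both fine. The providers can in fact keep serving, but because of the network problem ZooKeeper deletes all of the provider nodes. The consumers can then use the health check information they previously read from the node data to confirm whether a provider is really unreachable. This approach has to be planned in advance, though: a provider may have removed itself deliberately because it does not want to be accessed, yet its health check would still succeed. The overall design has to account for such scenarios. Either way, providing more information makes later extension and decision-making easier.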
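The consumer-side confirmation could look like the sketch below. It assumes the node data carried a host and a health check port that the consumer cached, and it uses a plain TCP connect as the simplest possible check; a real health check would likely be application-specific. `HealthProbe`, `isReachable`, and `demo` are hypothetical names.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

/** Consumer-side double check before dropping a provider that ZooKeeper reports as gone. */
final class HealthProbe {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
    static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    /** Probes a local stand-in provider while it listens and again after it has stopped. */
    static boolean[] demo() {
        boolean whileListening;
        int port;
        try (ServerSocket server = new ServerSocket(0)) { // port 0: let the OS pick a free port
            port = server.getLocalPort();
            whileListening = isReachable("127.0.0.1", port, 500);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        boolean afterStop = isReachable("127.0.0.1", port, 500);
        return new boolean[] {whileListening, afterStop};
    }

    public static void main(String[] args) {
        boolean[] result = demo();
        System.out.println("while listening: " + result[0]);
        System.out.println("after stop:      " + result[1]);
    }
}
```

A consumer would keep the connection only when the probe succeeds and the design has ruled out a deliberate removal, for example through an extra flag in the node data.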

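(6) Watches are one-shot

A ZooKeeper watch fires only once: after it has been triggered and handled, the next change will not trigger it again. The watch therefore has to be re-registered in the event handler: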

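    public static class ReceiveNodesChangeWatcher implements Watcher {
        private final ZooKeeper zooKeeper;
        private final String servicePath;

        public ReceiveNodesChangeWatcher(ZooKeeper zooKeeper, String servicePath) {
            this.zooKeeper = zooKeeper;
            this.servicePath = servicePath;
        }

        @Override
        public void process(WatchedEvent event) {
            try {
                // Passing this watcher again re-registers it for the next change.
                List<String> nodes = zooKeeper.getChildren(servicePath, this);
                // hand nodes to the routing logic here
            } catch (Exception e) {
                // If getChildren fails, the watch is not re-registered, so do not swallow this silently.
                e.printStackTrace();
            }
        }
    }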
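Each call to getChildren returns the full current child list rather than a delta, and several changes that happen before the watch is re-registered show up together in that one list. A consumer therefore usually compares the new list with the previous snapshot to decide which connections to open or close. A minimal sketch, with hypothetical names and sample addresses:

```java
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

/** Computes which providers appeared or disappeared between two child-list snapshots. */
final class ChildrenDiff {

    /** Children present in current but not in previous. */
    static Set<String> added(List<String> previous, List<String> current) {
        Set<String> result = new TreeSet<>(current);
        result.removeAll(previous);
        return result;
    }

    /** Children present in previous but not in current. */
    static Set<String> removed(List<String> previous, List<String> current) {
        Set<String> result = new TreeSet<>(previous);
        result.removeAll(current);
        return result;
    }

    public static void main(String[] args) {
        List<String> before = Arrays.asList("10.0.0.1", "10.0.0.2");
        List<String> after = Arrays.asList("10.0.0.2", "10.0.0.3");
        System.out.println("added:   " + added(before, after));   // [10.0.0.3]
        System.out.println("removed: " + removed(before, after)); // [10.0.0.1]
    }
}
```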

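Summary:

With the design above, a complete service discovery and registration setup is in place, and the problems raised at the start are solved. Routing moves from NetScaler to the consumers, while ZooKeeper only sends notifications when nodes change. A newly added node needs zero configuration and is picked up automatically.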